Gabriel Aguiar Noury

on 15 January 2023

The Robot Operating System (ROS) has been powering innovators for the last decade. But as more and more ROS robots are reaching the market, developers face new challenges deploying their applications. Why did we start using ROS & Docker? Is it convenient? Does it solve our challenges? Or is it simply a tool from another domain that we are trying to incorporate into an entirely different and possibly inappropriate field?

Docker containers were designed for the elastic domain, for elastic operations, for the clouds. As such, using Docker has several flaws and gaps when it comes to robotics or even IoT devices. We aim to flag those limitations for raising awareness among developers.

We explore all of the points below in more detail in our whitepaper Docker & ROS: When all you have is a hammer, everything looks like a nail.

ROS Docker – access to the host and resources

Docker’s most significant flaw in robotics applications is its ability to access the host disc and privileged resources like GPIO pins.

Docker misses dedicated, high-level interfaces for accessing low-level hardware. It does not include easy to understand mechanisms to provide access to host resources or permissions. So today, developers need to set up this communication by manually providing specific, fine-grained privileges to a container.

For instance, on a Linux host, to get access to the GPIO pins, one must use the –device flag to provide access to all of the /dev/gpio<num> device nodes. This requires identifying all of the gpio device nodes and, in a more general case, identifying and auditing every access that an application needs. Because this task is difficult, many developers fall back to running their Docker container with the “–privileged” option. This way, developers are exposing much more of the host OS to the container and effectively are negating all sandboxing for their container when doing so. That’s why it is highly recommended to not run privileged containers.

Running a container with a privileged flag removes the protective sandbox for the container running on your robot. As the privileged flag is used to access the PID of the host from the container, an attacker having an initial foothold on the container can escape from the container environment and access the host machine with root privilege.

Challenges for ROS Docker managing networking

When you’re deploying on devices things get really difficult to manage with Docker compose and setting up networking. Simply put, with Docker it is significantly more difficult to coordinate traditional mechanisms for configuring complex bits of software together.

To talk with each other, two containers can communicate through networking or sharing files on disk. Communicating through networking allows containers to send and receive requests to other applications by exposing ports. If they are sharing files on disk, Docker containers communicate by reading and writing files.

But since there are no predefined interfaces, you have to set up bridges, virtual networks, and hostnames to get one Docker container to talk to another. If you are using the host disk, you will run with the same struggles as mentioned in the previous section. Docker just gives you another abstraction layer in your own machine.

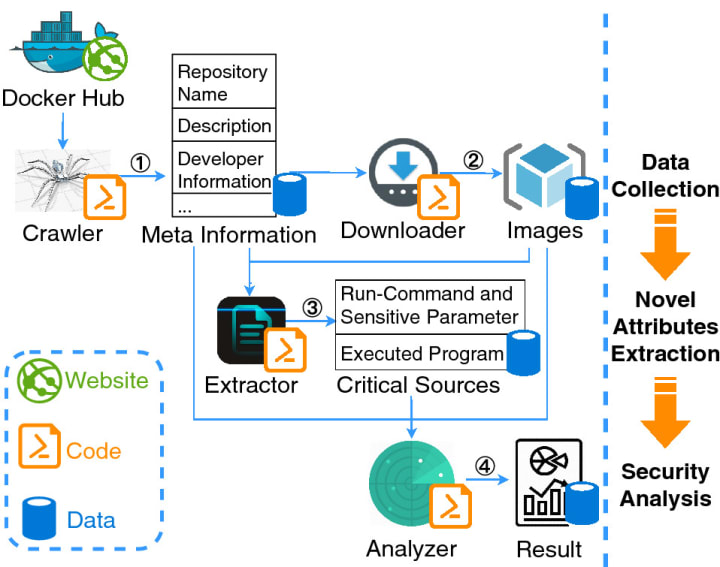

Docker security maintenance challenges increase on deployment

Implementing security maintenance protocols is a problem, with or without Docker. Scanning, identifying and building patches for vulnerabilities is time-consuming and work-intensive. But this is a barrier we all need to address (Learn more about security maintenance for ROS).

However, with Docker, developers do not get notifications of when a security update has landed or a CVE fix has been made available for Debian packages used in their container. Unlike other containers such as Snaps, Docker doesn’t come with a free notification centre that alerts developers when an update is available. You’re on the hook to know when you need to update things, or you will have to pay a monthly subscription for using a third party notification centre.

As a result, developers leave handling these security updates.

As Docker specifies “it is up to individual users to determine whether or not a CVE applies to how you are running your service“. This brings us back to having to take meaningful and impactful responsibility without having a security background.

Without a free and active out-of-the-box notification centre, and support for fixing these security issues, ROS Docker users will end up deploying solutions to the field that are a risk to your users.

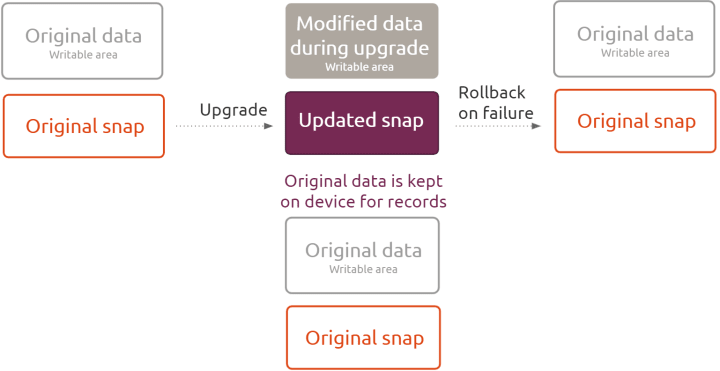

No transactional updates for ROS on Docker

What happens if an update fails? If your robot runs out of battery during an update? Or simply there is a bug. This recently was the case for IRobot, Roomba manufacturer. A firmware update caused navigation issues to their devices and a fix took several weeks to roll out worldwide.

The ability to automatically roll back to previous versions is extremely important for device manufactures. But also the ability to keep the integrity of all your data. That’s something that you don’t get with Docker containers. With Docker, you have two scenarios, either it fully works with the new version of the software, or it doesn’t work and you are back where you started before. That’s why developers end up stuck with data from the new version that needs to be manually migrated back to the old version before rolling back.

In the same way, all of the files that the previous version had available to it are destroyed. So if you have a database and the database gets corrupted while you’re trying to update, there is no safety mechanism to make a backup before the update.

No delta updates for ROS Docker applications

Bandwidth is a constraint for robots, and there is also a cost associated with this. Depending on your application, more than frequent, robots have limited internet connections and data plans so saving bandwidth on updates is crucial. Logically, heavy updates also impact power consumption.

That’s why we have delta packages; an update that only requires the user to download the code that has changed, not the whole program. So what if your package is 500 MB, but a delta between two revisions can be just 20 MB? That’s a huge difference in terms of efficiency and bandwidth costs. Delta updates reduce bandwidth requirements by more than 95%. (Learn more about how our delta updates have helped Cyberdyne)

But docker does not have delta updates. So if you deployed with docker, you must think about the cost that your updates will represent.

Docker fleet management is a challenge

Docker is good for ephemeral workloads. Think of it like a serverless process, where you hack some code up and you just go and run in the cloud once and get the answer. You don’t need to give a name to that container. Why would you? It’s like going to the shell, registering the name for what I’m about to do, type a command and then request to run your register. Docker containers save developers from doing that.

But for fleet management, you need to know the state of your device. If it’s running the latest software, if you are testing a fix with a cluster of devices, or for operational reasons, you need to have an identification of the machine. Docker does not provide that identification with the machine out of the box. There is a separation between the process and the actual machine because in the cloud I don’t care if I have 20 machines and which is hosting that process. For fleet management, you care.

People might think that Kubernetes is the solution. But it is not. Kubernetes is successfully used in container-orchestration systems for automating computer application deployment, scaling, and management. It is open source, and widely used to support data centre outsourcing to public cloud service providers or can be used for web hosting at scale. Kubernetes is not a solution for fleet management. Kubernetes is designed for an aggregate of compute. Robotics fleet management is not an aggregate of compute. It’s the exact opposite of that.

- Docker is not enough for you? Explore the best way of deploying robotics applications with snaps

- Discover how to migrate your docker application to snaps

- Do you want to learn more about Docker and robotics? Read our whitepaper Docker & ROS: When all you have is a hammer, everything looks like a nail