Canonical

on 26 September 2023

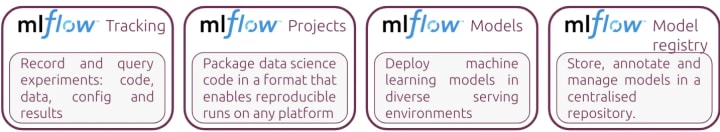

London, United Kingdom, 26 September 2023. Canonical announced today that Charmed MLFlow, Canonical’s distribution of the popular machine learning platform, is now generally available. Charmed MLFlow is part of Canonical’s growing MLOps portfolio. Ideal for model registry and experiment tracking, Charmed MLFlow is integrated with other AI and big data tools such as Apache Spark and Kubeflow. The solution runs on any infrastructure, from workstations to public and private clouds. Conveniently, it is offered as part of Canonical’s Ubuntu Pro subscription and priced per node, with a support tier available. It comes with extensive developer features and a ten-year security maintenance commitment.

Simplified deployment from workstations to any infrastructure

Charmed MLFlow can be deployed on a laptop within minutes, facilitating quick experimentation. It is fully tested on Ubuntu and can be used on other operating systems through Canonical’s Multipass or Windows Subsystem for Linux (WSL).

“MLFlow has become the leading AI framework for streamlining all ML stages. Its popularity arises from its flexibility in facilitating modest local desktop experimentation and extensive cloud deployment, catering to both individual and enterprise needs”, said Cedric Gegout, VP Product Management at Canonical. “This made Charmed MLFlow a fitting addition to our Canonical MLOps suite, offering cost-effective solutions that enable developers to start small and scale up as their business grows, without the typical ML infrastructure hassle and with a simple Ubuntu Pro subscription”.

Charmed MLFlow has model registry capabilities, enabling professionals to store, annotate and manage models in a centralised repository. This brings order to the machine learning development phase and provides visibility on the status of all experiments performed, including results, changes made and possible configurations.

Charmed MLFlow runs in any environment, whether public or private cloud, and supports hybrid and multi-cloud scenarios. It works on any CNCF-conformant Kubernetes distribution, such as MicroK8s, Charmed Kubernetes or EKS. Data scientists can move their models from laptops to their infrastructure of choice, using the same tooling. This allows for a seamless migration between clouds, enabling professionals to benefit from the computing power they need for their use case.

Automated lifecycle management and integrations

Charmed MLFlow benefits from improved lifecycle management, for easy upgrades and updates. In addition to the upstream capabilities, Canonical’s distribution automates these tasks and enables users to easily perform them. This reduces time spent on operations and takes away the burden of library, framework and tool incompatibility. The solution also integrates seamlessly with other machine-learning tools.

Charmed MLFlow can be deployed standalone and integrated with tools such as Jupyter Notebook, Charmed Kubeflow and KServe. Additionally, it includes infrastructure monitoring through Canonical Observability Stack (COS). When combined with Charmed Kubeflow, users can tap into other features like hyper-parameter tuning, GPU scheduling or model serving.

Enterprise-ready ML projects with secure and supported tooling

Charmed MLFlow benefits from security patching through Canonical’s Ubuntu Pro subscription. Customers get timely patches for common vulnerabilities and exposures (CVEs) and a ten-year security maintenance commitment. Ubuntu Pro also provides hardening features and compliance with standards like FedRAMP, HIPAA and PCI-DSS, which are ideal for enterprises running AI/ML workloads in highly regulated environments.

Besides security patching, enterprises can get 24/7 support for Charmed MLFlow deployment, uptime monitoring, bug fixes and operations. For organisations that lack internal expertise in machine learning infrastructure but aim to jumpstart their efforts, Canonical offers managed services.

Learn about Charmed MLFlow and generative AI at Canonical’s AI roadshow

The upcoming Canonical AI Roadshow, which starts on 21 October 2023, will showcase how Canonical can help enterprises speed up their AI journeys. A line-up of presentations, talks, demos, interviews and case studies will focus on the latest trends in generative AI and the critical role of open source in driving innovation in this space.

Browse the full line-up or contact us to learn more.